This article is based on Escaping DevOps hell with Codex, an article by our CTO Jilles van Gurp.

Development teams rarely get blocked by the big idea. They get blocked by the ugly operational detail wrapped around it. A feature is ready, a migration needs to happen, a cluster needs to be upgraded, or a deployment setup needs to be cleaned up. Suddenly the team is no longer building product. It is spending days in shell sessions, YAML, networking rules, permissions, bastion hosts, and configuration drift.

That is why DevOps so often feels disproportionate to the business outcome. The original task may be straightforward: move this system, deploy that service, tighten this rollout, reduce hosting cost, make the environment safer. But every operational step sits near failure modes that matter: downtime, security mistakes, bad backups, partial rollouts, silent misconfiguration, or data loss. Even experienced technical people can lose large amounts of time in this layer.

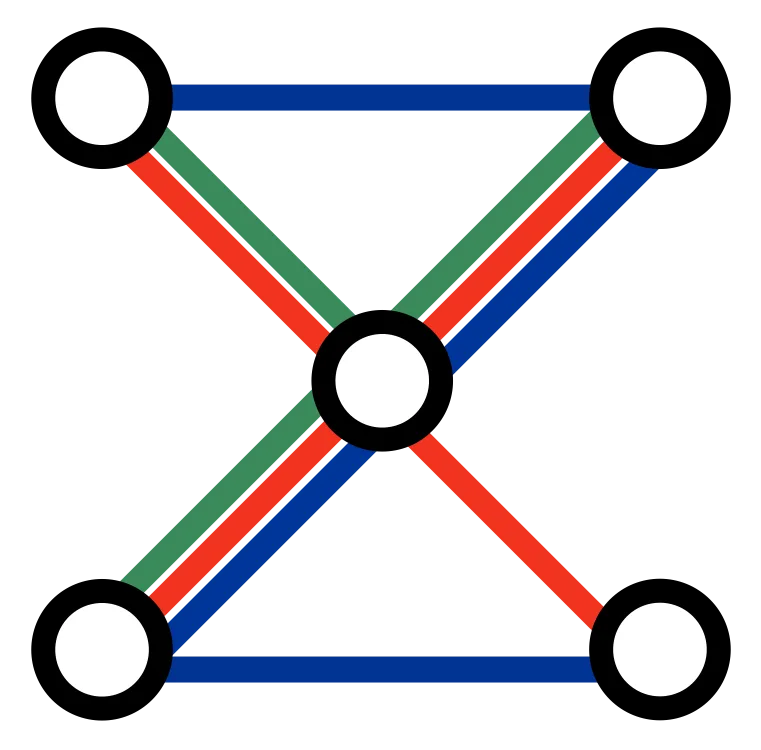

AI changes that, but not in the simplistic way many people assume. The useful pattern is not to hand infrastructure to a chatbot and hope for the best. The useful pattern is to let an AI coding agent work inside a structured environment where it can inspect repositories, understand scripts, edit configuration, run checks, compare results, and document what it learned. In that setup, the agent becomes a practical execution layer for work that used to consume senior attention.

This is particularly effective in development and operations because the work already lives in machine-readable systems. Repositories, infrastructure code, Ansible, Docker, CI scripts, deployment configs, and validation steps are all things an AI can operate on directly. That matters. A good AI workflow is much easier to build when the work itself is already structured, versioned, and testable.

The catch is that this still needs experienced judgment. The difference between a productive AI-supported migration and a dangerous one is usually not model capability alone. It is workflow design. Somebody needs to define what success looks like, what preflight checks happen first, what approvals are required, what should trigger rollback, and what evidence counts as safe enough to continue. That is where operational maturity still matters.
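To make that workflow design concrete, here is a minimal Python sketch of a gated rollout. It assumes each operational step can be expressed as a run action, a verification check, and a rollback action. The names `Step`, `Report`, `run_gated`, and `approve` are hypothetical and not part of Codex or any particular tool; in practice the actions would wrap real scripts, playbooks, or deployment commands.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Step:
    """One operational step in a hypothetical gated rollout."""
    name: str
    run: Callable[[], None]       # applies the change
    verify: Callable[[], bool]    # evidence that the change is safe to keep
    rollback: Callable[[], None]  # undoes the change
    needs_approval: bool = False  # require a human "yes" before running


@dataclass
class Report:
    """What happened, kept so a human can review the run afterwards."""
    verified: List[str] = field(default_factory=list)
    rolled_back: List[str] = field(default_factory=list)
    stopped_at: str = ""  # empty means every step ran and verified


def run_gated(preflight: List[Callable[[], bool]],
              steps: List[Step],
              approve: Callable[[str], bool]) -> Report:
    report = Report()
    # Preflight checks: change nothing unless every check passes.
    if not all(check() for check in preflight):
        report.stopped_at = "preflight"
        return report

    done: List[Step] = []
    for step in steps:
        # Confirmation gate: sensitive steps stay human-controlled.
        if step.needs_approval and not approve(step.name):
            report.stopped_at = step.name
            return report

        step.run()
        if step.verify():
            report.verified.append(step.name)
            done.append(step)
            continue

        # Rollback trigger: undo the failed step, then earlier steps in reverse order.
        for undo in [step, *reversed(done)]:
            undo.rollback()
            report.rolled_back.append(undo.name)
        report.stopped_at = step.name
        return report

    return report
```

For interactive use, `approve` could prompt on the terminal, for example `lambda name: input(f"Run {name}? [y/N] ").strip().lower() == "y"`. The point of the design is that the agent can execute and verify steps on its own, while the preflight checks, the approval gate, and the rollback rule stay explicit and reviewable.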

The teams getting real value from AI in this area are not the ones treating it like a magic answer box. They are the ones turning experience into reusable operating patterns. When a rollout works, they capture the steps. When a failure happens, they improve the instructions and the checks. When the AI learns a reliable fix, they turn that into a repeatable runbook. Over time, the team is no longer starting from scratch on every messy operational task. That same pattern also sits behind our broader view on AI workflows, where structured tools and repositories give AI much more room to operate safely and usefully.

This is one reason we think coaching matters more than tool access. Most teams can already open an AI product and ask it for help. That is not the hard part. The hard part is teaching development and operations teams how to work with AI in a disciplined way: how to break work into safe steps, how to review outputs, how to keep logs and reports useful, how to build confirmation gates, and how to decide what should remain human-controlled. That operating shift is closely related to what we described in How AI Will Create New Departments Inside Small Companies: the value comes when AI becomes part of the working system, not just an assistant sitting beside it.

Once those habits are in place, the payoff can be substantial. Infrastructure migrations compress. Configuration cleanup gets easier. Repetitive diagnostics become faster. Rollouts become more deliberate instead of more manual. Teams spend less energy on ritualistic troubleshooting and more energy on architecture, delivery, and customer-facing work. That does not eliminate operations work, but it changes the cost structure of doing it well.

For smaller companies, this matters even more. Many do not have a dedicated DevOps team. The burden lands on a CTO, senior developer, platform lead, or whoever is currently least busy, which usually means nobody. AI can give that team more operational reach, but only if the way of working improves with it. Otherwise the company just automates confusion. And once those ways of working are in place, they often contribute to the wider acceleration effect we described in What if time to market was measured in hours or days instead of months or years?

The practical opportunity is not to replace your development and operations teams. It is to upgrade how they operate. If your developers and operators are capable but still spending too much time in avoidable infrastructure pain, we can help coach the team on agentic ways of working, introduce the right guardrails, and turn repeated DevOps work into safer AI-supported workflows. A good place to start is our Engineering Team Agentic Setup, or simply talk to us if you want to work through your current bottlenecks.